Step into the future and discover how next-generation intelligence is fundamentally reshaping our world, driving unprecedented innovation, and unlocking the next chapter of human potential.

The origins of artificial intelligence (AI) date back to the 1940s, when attempts were made to describe the human nervous system using mathematical models. Its development has been unbroken since then, yet gradual, as if we were climbing a staircase, pausing longer on some steps and shorter on others. The pace of development has accelerated over the past 20 years, and we are climbing the stairs faster and faster.

Currently, in 2026, it is clear to everyone that AI is transforming (and has already transformed) our lives and the economy, but it is also evident that progress has slowed, and the enormous energy demands of current models and the difficulties of training them are major problems.

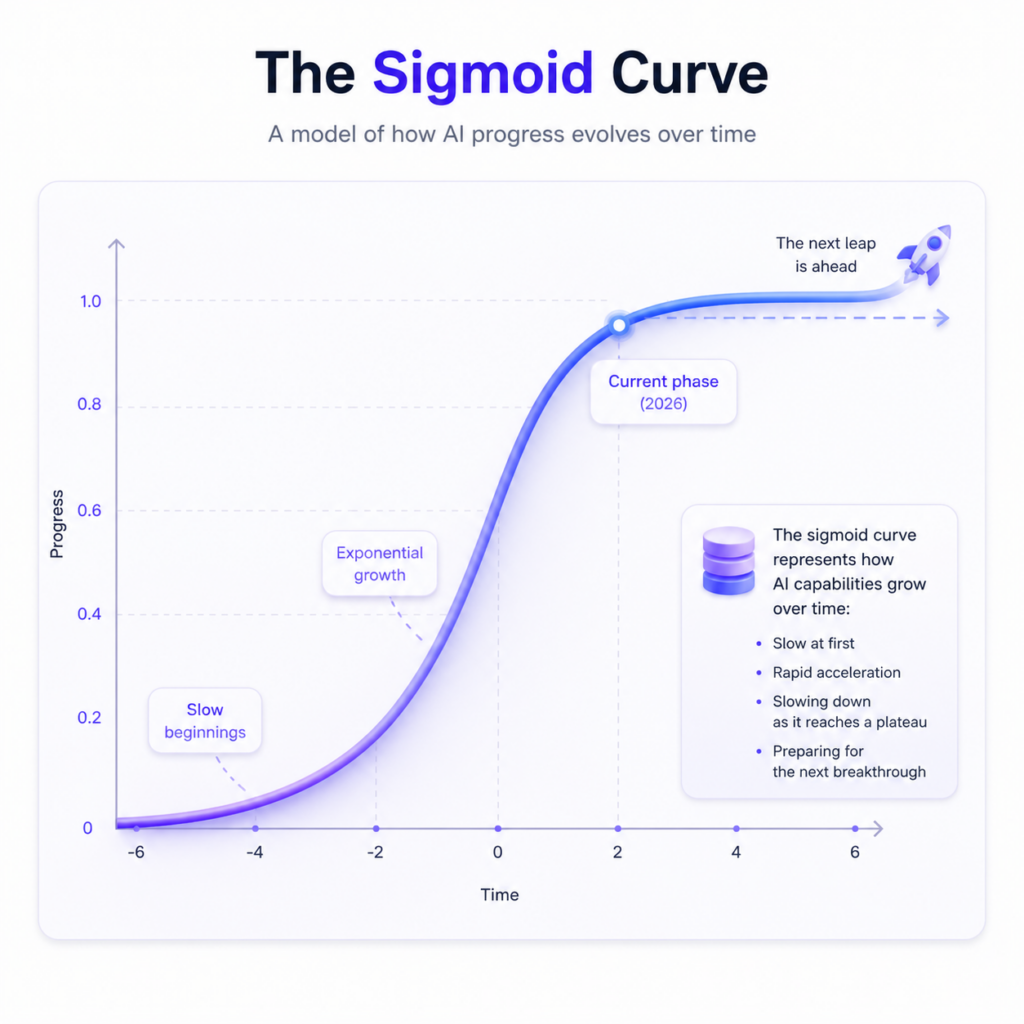

Two or three years ago, Silicon Valley was abuzz with the idea that exponential growth had begun and that we were moving unstoppably toward Artificial General Intelligence (AGI). Even then, more cautious researchers were describing a sigmoid curve, as seen in the figure below, with AI in its exponential phase. Now, in 2026, it is clear that the curve is beginning to plateau. For large language models, one cause of the plateau is the limited availability of training content, but other technological barriers also constrain current progress, as discussed later on.

So the expansion of AI has slowed down, but everyone is certain that progress will continue, and they are looking for opportunities for the next sigmoid leap.

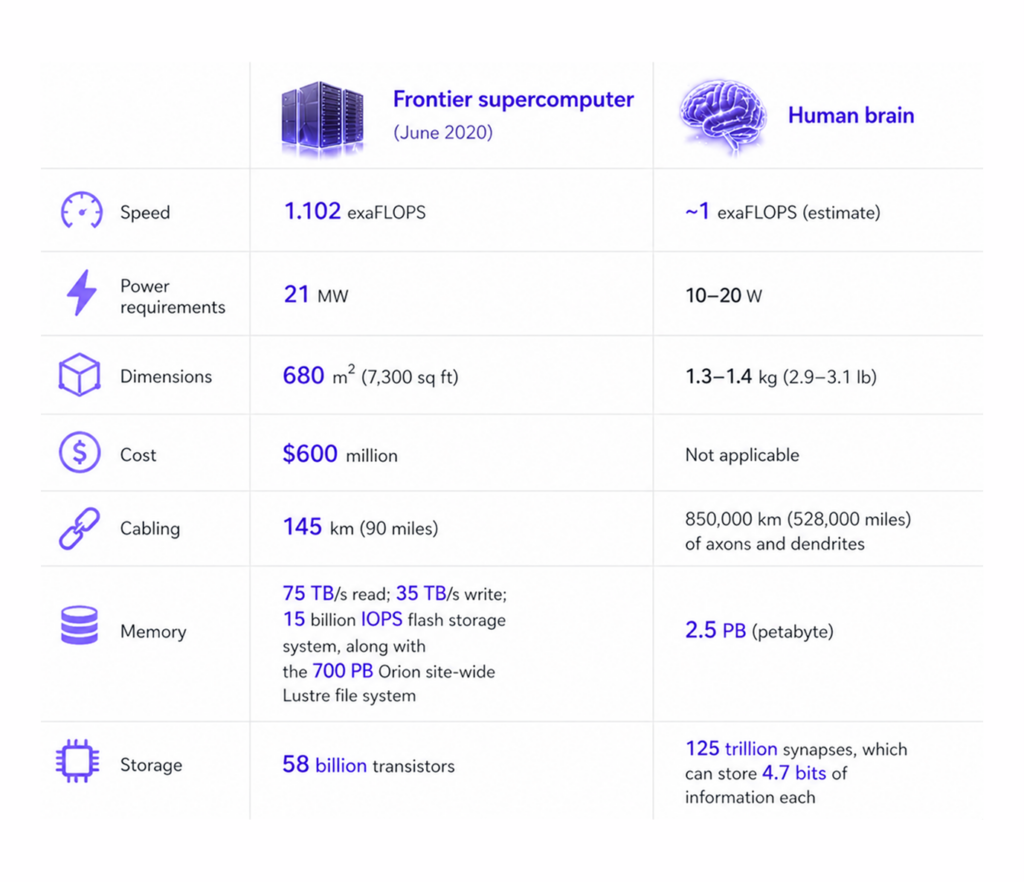

Since the human brain still operates far more efficiently than even the most advanced large language models and consumes as little energy as an energy-saving light bulb, the ReBuildAI research team is confident that the momentum needed for the next leap forward will come from the field of biology. In the beginning, the emulation of biology set artificial intelligence on its path to conquest (only to veer off in a different direction along the way). Now, by returning to the nervous system, leveraging nearly 100 years of neuroscience knowledge, and building new mathematical and biophysical models, we can make our current models more energy-efficient and effective by orders of magnitude.

Today’s artificial intelligence is typically based on open-loop systems: it processes, generates, and responds. We are researching the foundations of intelligent systems that learn, adapt, and evolve through feedback (closed-loop systems).

We do not believe that science alone or business alone can achieve the next sigmoid leap; therefore, we have provided business support to a multidisciplinary research team (medicine, biology, biophysics, mathematics, theoretical physics) to freely brainstorm, test individual ideas on real systems, and jointly find the right path to uniting science and business. Since the human brain is approximately 100,000 times more efficient and orders of magnitude more energy-efficient than current Transformer models, our working assumption is that, as long as we are on the right track, our systems must become visibly more efficient and more energy-efficient, which gives us a clear signal of whether we are heading in the right direction. Of course, it is very important that all of this take place in a safe environment where a potential singularity cannot cause irreversible damage.

Just as the human immune system protects our bodies, we are building a protective system around the ReBuildAI system to safeguard it against misuse and restrict the algorithms: protecting the system, and protecting the world from the system.

At this stage of the project, we are simultaneously exploring several avenues to identify biologically inspired architectures where we can achieve a significant increase in algorithmic efficiency.

We are working hard to rewrite the algorithms that underpin current AI applications and make them available to the world.

The most extensively researched biologically inspired model to date, with numerous modifications.

We are also testing various neural networks and deep-learning systems.

We are also testing adaptations of Transformer models based on our ideas.

Real neurons perform the computations in cultured brain tissue. This system helps us understand the differences between how the real brain and spiking neural networks (SNNs) function; with the right inputs, it could also prove to be a promising direction in its own right.

Glia-Neuron Ratio: Higher intelligence has been associated with a higher glia-to-neuron ratio; research aims to incorporate glia into mathematical brain models.

Dendritic Computation: Enhancing models by integrating the complex, local signal processing that occurs within dendrites.

Rapid Decision-Making: The brain makes a quick initial choice and attempts to validate it; it only switches to a different model if the first one fails to fit.

Drumming Model: A method of encoding visual information using rhythmic beat patterns.

The Role of Noise: Utilizing inherent biological noise to improve the efficiency and adaptability of neural networks.

Genetic Algorithms: Applying non-linear optimization methods to evolve and refine neural learning processes.
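As a rough illustration of the genetic-algorithm idea, the sketch below evolves a small parameter vector using selection, crossover, and mutation, with no gradients involved. It is a minimal example under stated assumptions, not the ReBuildAI team's method: the function names (`evolve`, `toy_loss`), the population settings, and the toy objective are all hypothetical stand-ins for whatever learning process would actually be scored.

```python
import random

def evolve(fitness, dim, pop_size=30, generations=60, sigma=0.3, seed=0):
    """Minimise `fitness` with a basic genetic algorithm.

    All parameters here are illustrative assumptions, not values from the text.
    """
    rng = random.Random(seed)
    pop = [[rng.uniform(-2, 2) for _ in range(dim)] for _ in range(pop_size)]
    history = []  # best score seen in each generation
    for _ in range(generations):
        pop.sort(key=fitness)
        history.append(fitness(pop[0]))
        parents = pop[: pop_size // 2]        # truncation selection: keep the better half
        children = [list(pop[0])]             # elitism: carry the best individual over unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            child = [rng.choice(pair) for pair in zip(a, b)]    # uniform crossover
            child = [g + rng.gauss(0, sigma) for g in child]    # Gaussian mutation
            children.append(child)
        pop = children
    pop.sort(key=fitness)
    history.append(fitness(pop[0]))
    return pop[0], history

def toy_loss(w):
    # Hypothetical non-convex objective standing in for a learning-rule score.
    return sum((wi * wi - 1.0) ** 2 for wi in w)

best, history = evolve(toy_loss, dim=3)
print("best score:", history[-1])
```

Because the best individual is always carried over, the best score can never get worse from one generation to the next. The search only needs to evaluate the objective, which is why this family of methods suits non-linear problems where gradients are unavailable or unhelpful.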

While the human brain performs arithmetic more slowly than computers, it excels at parallel processing and handling uncertainty, with a storage capacity estimated at around 2,500 TB. Operating on roughly 20 watts, the brain generalizes effectively from minimal data, whereas AI requires massive datasets and immense energy for similar tasks.

The journey of trainable neural networks began with the 1950s Perceptron, a simple model of a single neuron that could only handle basic tasks. The real breakthrough arrived in the 1980s with Multi-Layer Perceptrons and the backpropagation algorithm, which allows networks to learn by calculating the output error and propagating it backward through the layers to adjust the internal weights. Geoffrey Hinton, who helped popularize the method, shared the 2024 Nobel Prize in Physics for foundational work on machine learning with artificial neural networks. While "backprop" fueled today's deep learning revolution, it is computationally expensive, requiring massive computing power and energy. Critics argue that because biological brains do not appear to use this method, we should pivot toward lighter, more efficient algorithms inspired by nature's own "local" learning rules.
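To make the backward pass concrete, here is a minimal sketch of backpropagation for a tiny network with one tanh hidden layer and a single linear output. The network size, the squared-error loss, the learning rate, and all names (`forward`, `backprop`, `sgd_step`) are assumptions chosen for illustration; real frameworks compute these gradients automatically.

```python
import math
import random

def forward(x, W1, W2):
    """Forward pass: tanh hidden layer, linear scalar output."""
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W1]
    y = sum(w * hj for w, hj in zip(W2, h))
    return h, y

def loss(x, t, W1, W2):
    """Squared error between the network output and the target t."""
    _, y = forward(x, W1, W2)
    return 0.5 * (y - t) ** 2

def backprop(x, t, W1, W2):
    """Gradients of the loss with respect to W1 and W2, via the chain rule."""
    h, y = forward(x, W1, W2)
    dy = y - t                                   # error at the output
    gW2 = [dy * hj for hj in h]                  # output-layer gradient
    # Send the error backward: each hidden unit needs the downstream weight W2[j].
    dh = [dy * w * (1.0 - hj * hj) for w, hj in zip(W2, h)]
    gW1 = [[d * xi for xi in x] for d in dh]     # hidden-layer gradient
    return gW1, gW2

def sgd_step(x, t, W1, W2, lr=0.1):
    """One gradient-descent update; returns new weight lists."""
    gW1, gW2 = backprop(x, t, W1, W2)
    new_W1 = [[w - lr * g for w, g in zip(wr, gr)] for wr, gr in zip(W1, gW1)]
    new_W2 = [w - lr * g for w, g in zip(W2, gW2)]
    return new_W1, new_W2

rng = random.Random(0)
W1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(3)]  # 3 hidden units, 2 inputs
W2 = [rng.uniform(-1, 1) for _ in range(3)]
x, t = [0.5, -0.3], 1.0                                          # one made-up training example

W1_trained, W2_trained = W1, W2
for _ in range(100):
    W1_trained, W2_trained = sgd_step(x, t, W1_trained, W2_trained)

print("loss before:", loss(x, t, W1, W2))
print("loss after: ", loss(x, t, W1_trained, W2_trained))
```

Note that the update for a hidden weight depends on `W2`, the weights of the layer after it. This dependence on downstream information is what the "local" learning rules mentioned above try to avoid: in a local rule, each connection would be updated using only signals available at that connection.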