Artificial intelligence (AI) traces its origins back to the 1940s, when researchers first attempted to model the human nervous system mathematically.

Since then, progress has been continuous but not linear.

AI has evolved step by step, sometimes accelerating and sometimes pausing, much like climbing a staircase.

Over the past 20 years, the pace has increased dramatically.

Just a few years ago, the dominant narrative, especially in Silicon Valley, was that AI had entered an unstoppable exponential phase and was rapidly approaching Artificial General Intelligence (AGI).

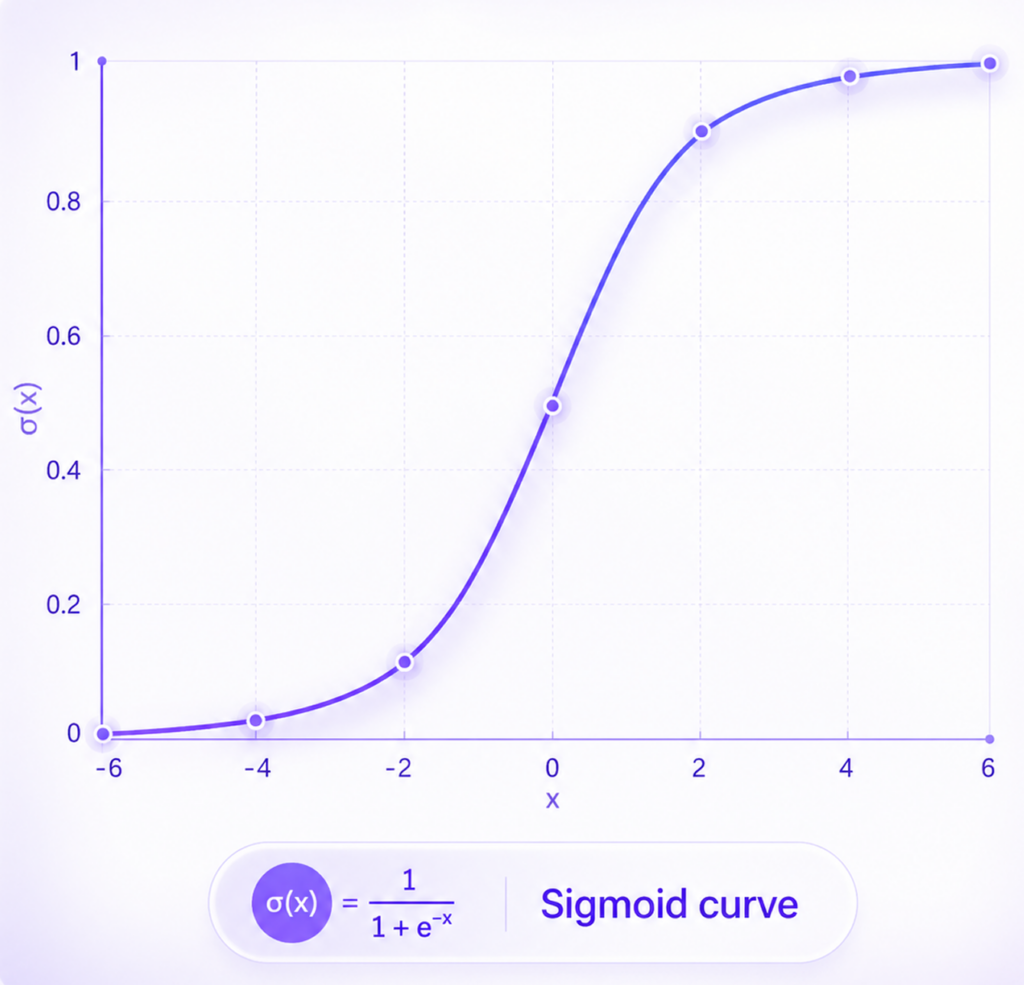

More cautious researchers, however, described this growth as a sigmoid (S-shaped) curve, in which rapid growth eventually levels off.
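The difference between the two readings can be made precise with the standard formulas. The symbols below ($a$, $k > 0$, $L$, $t_0$) are generic parameters chosen for illustration, not values fitted to any measure of AI progress. An exponential grows at a constant relative rate forever, while a logistic (sigmoid) curve has a ceiling $L$:

$$E(t) = a\,e^{kt}, \qquad S(t) = \frac{L}{1 + e^{-k(t - t_0)}}$$

For $t \ll t_0$ the term $e^{-k(t - t_0)}$ dominates the denominator, so

$$S(t) \approx L\,e^{k(t - t_0)},$$

which is exponential growth at the same rate $k$. Differentiating gives

$$S'(t) = k\,S(t)\left(1 - \frac{S(t)}{L}\right),$$

so the absolute growth rate peaks at the inflection point $t = t_0$, where $S = L/2$, and declines afterward. Seen from inside the early phase, the two curves are hard to tell apart.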

AI progress has not stopped, but it is slowing and searching for its next leap.

The next major breakthrough in AI will come from biology.

AI itself was originally inspired by the brain, but over time it diverged from it.

We believe it is time to return to those biological roots.

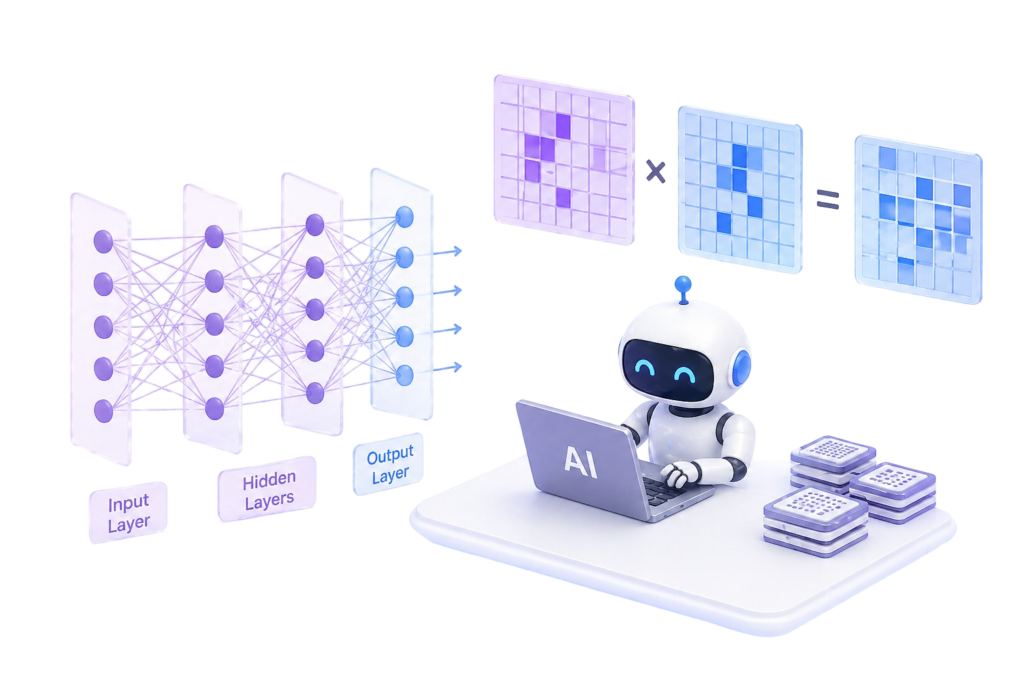

The foundations of modern AI date back to the Perceptron model of the 1950s.
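A perceptron is a single threshold unit whose weights change only when it misclassifies an example. The sketch below is a minimal Python illustration of the classic perceptron learning rule, not a historical reconstruction: the function names, the learning rate, the epoch count and the AND training data are illustrative choices.

```python
def predict(weights, bias, x):
    """Threshold unit: output 1 if the weighted sum plus bias is positive, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, n_features, lr=1.0, epochs=20):
    """Perceptron learning rule: w <- w + lr * (target - prediction) * x."""
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0 or +1
            # Correctly classified samples (error == 0) leave the parameters unchanged.
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Logical AND is linearly separable, so the rule is guaranteed to converge on it.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA, n_features=2)
print([predict(weights, bias, x) for x, _ in AND_DATA])  # [0, 0, 0, 1]
```

Because a single perceptron can only draw a linear decision boundary, it cannot learn functions such as XOR; those require multiple layers, and training multiple layers is the problem backpropagation addressed.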

Later, in the 1980s, backpropagation made multi-layer neural networks trainable, laying the groundwork for the deep learning revolution.

This line of research on artificial neural networks, recognized with the 2024 Nobel Prize in Physics awarded to John Hopfield and Geoffrey Hinton, was undeniably transformative.
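Backpropagation is the chain rule applied layer by layer, from the loss back to every weight. The following is a minimal sketch, assuming a hypothetical network with two inputs, two sigmoid hidden units, one linear output and a squared-error loss; the weights, input and target are arbitrary illustrative values, and the helper names are our own. The analytic gradients are checked against central finite differences.

```python
import copy
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """x -> sigmoid hidden layer -> single linear output."""
    W1, b1, w2, b2 = params
    z = [sum(wji * xi for wji, xi in zip(W1[j], x)) + b1[j] for j in range(len(b1))]
    h = [sigmoid(zj) for zj in z]
    y_hat = sum(w2j * hj for w2j, hj in zip(w2, h)) + b2
    return h, y_hat


def loss(params, x, y):
    _, y_hat = forward(params, x)
    return 0.5 * (y_hat - y) ** 2


def backprop(params, x, y):
    """Gradients of the loss w.r.t. [W1, b1, w2, b2] via the chain rule."""
    _, _, w2, _ = params
    h, y_hat = forward(params, x)
    d_out = y_hat - y                          # dL/dy_hat
    g_w2 = [d_out * hj for hj in h]            # dL/dw2_j = d_out * h_j
    # Send the error back through w2 and the sigmoid derivative h * (1 - h).
    d_hid = [d_out * w2[j] * h[j] * (1.0 - h[j]) for j in range(len(h))]
    g_W1 = [[d_hid[j] * xi for xi in x] for j in range(len(h))]
    return [g_W1, d_hid, g_w2, d_out]          # d_hid is also dL/db1


def get(obj, path):
    """Follow a tuple of indices into the nested parameter lists."""
    for key in path:
        obj = obj[key]
    return obj


def numerical_grad(params, x, y, path, eps=1e-6):
    """Central finite difference for the parameter at `path`, e.g. (0, 1, 0) is W1[1][0]."""
    def shifted(delta):
        p = copy.deepcopy(params)
        parent = get(p, path[:-1])
        parent[path[-1]] += delta
        return loss(p, x, y)
    return (shifted(eps) - shifted(-eps)) / (2 * eps)


params = [[[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1], [1.0, -1.5], 0.2]
x, y = [1.0, 2.0], 0.5
grads = backprop(params, x, y)
paths = [(0, j, i) for j in range(2) for i in range(2)] + [(1, 0), (1, 1), (2, 0), (2, 1), (3,)]
max_err = max(abs(get(grads, p) - numerical_grad(params, x, y, p)) for p in paths)
print(f"max |backprop - finite difference| = {max_err:.2e}")
```

In a real network the per-unit loops above become matrix products, and for a dense layer the backward pass needs about twice the matrix arithmetic of the forward pass.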

However, backpropagation introduced a critical constraint:

Training requires massive matrix operations → enormous computational cost
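To give a sense of scale, the arithmetic for a single fully connected layer can be sketched as below. This assumes the common convention of counting one multiply-add as 2 FLOPs and ignores bias, activation and optimizer costs; the function names and the layer and batch sizes in the example are hypothetical.

```python
def matmul_flops(m, k, n):
    """FLOPs for an (m x k) @ (k x n) product, conventionally counted as 2*m*k*n."""
    return 2 * m * k * n


def dense_layer_training_flops(batch, n_in, n_out):
    """Approximate FLOPs for one training step of a dense layer y = x @ W.

    Forward:  x (batch x n_in) @ W (n_in x n_out)
    Backward: dX = dY @ W^T and dW = x^T @ dY, two more products of the same size.
    Bias, activation and optimizer costs are ignored.
    """
    forward = matmul_flops(batch, n_in, n_out)
    grad_input = matmul_flops(batch, n_out, n_in)
    grad_weights = matmul_flops(n_in, batch, n_out)
    return forward + grad_input + grad_weights  # = 6 * batch * n_in * n_out


# Hypothetical example: a 4096 x 4096 layer processing a batch of 1024 inputs.
flops = dense_layer_training_flops(batch=1024, n_in=4096, n_out=4096)
print(f"{flops:,} FLOPs per training step")  # 103,079,215,104 FLOPs per training step
```

That is roughly 100 billion operations for one layer and one step; a deep network stacks many such layers and repeats the step a very large number of times.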

Biological intelligence does not rely on backpropagation.

Energy inefficiency & scaling limitations